Several users of Microsoft's Bing Image Creator have reported receiving erroneous content violation notifications for seemingly harmless prompts such as “man breaks server rack with a sledgehammer” and “a cat with a cowboy hat and boots.” The issue was first documented in an article by Windows Central, and subsequent reports from Reddit users suggest it is widespread.

Microsoft Responds to Violation Message Concerns

Microsoft has acknowledged the problem. When asked on X (formerly known as Twitter) about the erroneous content violation messages, Mikhail Parakhin, the company's head of Windows, agreed that the behavior was odd and confirmed that it is being investigated. His reply was brief: “Hmm. This is weird – checking.”

Bing Image Creator: A History of Content Restriction Issues

This is far from the first time Microsoft's Bing Image Creator has had trouble with content moderation. At its launch in March, the AI art maker generated violation messages for inputs as innocuous as the term “Bing.” At the time, Parakhin noted that the overly restrictive filtering was deliberate, designed to err on the side of caution. His reaction to the current reports, however, suggests that the recent over-blocking is unintended, and a fix is expected to follow shortly.

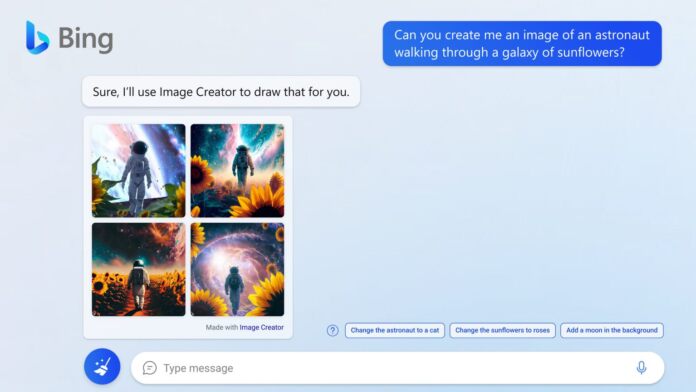

Microsoft recently integrated OpenAI's DALL-E 3 model into Bing Image Creator, which led to a surge in users trying the service. The surge caused a major slowdown in image creation, prompting the tech giant to invest in additional GPUs for its data centers to speed up the process. The current content-flagging issue arose soon after this update, which suggests the two may be related, though Microsoft has not yet provided a detailed explanation.