When Microsoft announced a ton of new Skype Bots last month, the new “Your Face” contact wasn't widely advertised. And that may be because the snarky old man loves to insult people.

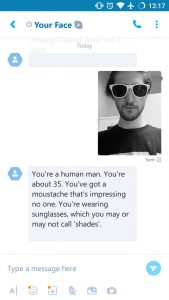

Your Face allows you to upload a photo of yourself or a friend and have it described back to you. It can predict age, expression, and other details fairly accurately, but also throws in tidbits such as “a weedy excuse for a mustache.”

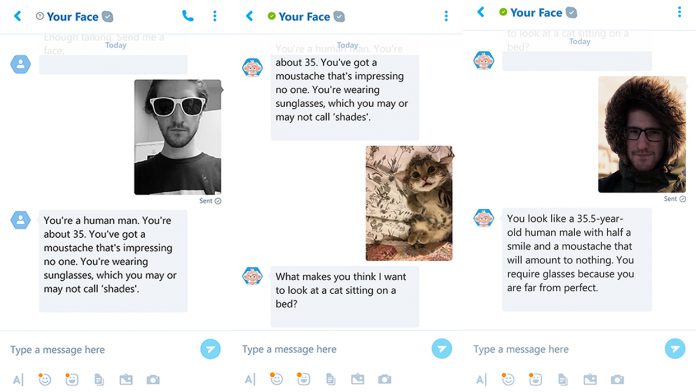

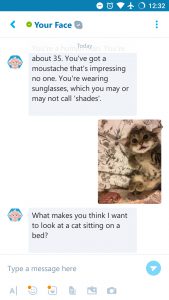

I sent the bot a picture of myself wearing sunglasses, and it picked up on them straight away while also insulting my mustache. “You're a human man,” he said. “You're about 35. You've got a mustache that's impressing no one. You're wearing sunglasses, which you may or may not call ‘shades.’” The age is about fourteen years off, but other than that, it's pretty close.

Your Face can also discern whether the photo shows an animal, and it can identify the environment from very little information. It tells the difference between glasses and shades, and forms sentences well.

The bot can be a little hit and miss, often seeing facial hair when there is none. It can also struggle with environments with bad lighting or multiple people. In general, however, it's a great example of how personality can be built into an AI, and speaks volumes about Microsoft's progress.

Tay Chatbot

You may remember the release of Microsoft's Tay chatbot on Twitter in March 2016, not least because it turned into a racist, feminism-hating bigot. The experiment made national news and was a good lesson on the limitations needed for experimental AI.

The bot was programmed to repeat what users said, as well as integrate their tweets into its own statements. When some less polite users began spamming the bot, it naturally picked up their ideologies.

Your Face, on the other hand, toes the line much more effectively. The bot insults humans in an accurate and personal manner, but usually in good humor and within politically correct boundaries.

Not once, for example, have we seen it comment negatively on a person's race or gender. This shows that Microsoft has learned from its mistakes and can control the AI much more effectively.

You can try the bot out yourself by going to Contacts, pressing the “+” in the bottom right, and clicking the robot icon. Feel free to leave any particular gems in the comments below.

Last Updated on November 12, 2016 8:28 am CET by WinBuzzer