Facial recognition is a technology that is here to stay. At the moment, many are unclear how the technology will evolve and whether regulation will target it. We have previously reported on Microsoft's internal struggle with the ethics of facial recognition technology. Still, development continues apace.

Japanese company Fujitsu says it has created a solution that allows facial recognition to pick up emotions more efficiently. The company's researchers have leveraged AI to track subtle movements in a user's facial expression. With this ability, facial recognition can detect more nuanced emotions such as confusion.

Until now, the technology has been limited to eight core expressions: fear, anger, disgust, contempt, happiness, sadness, neutral, and surprise.

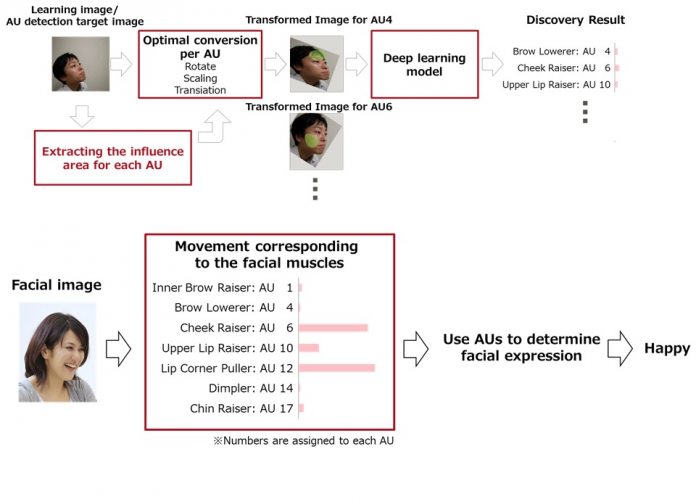

Fujitsu's new technology can detect subtler "micro" emotions. It does this by identifying action units (AUs), the small facial muscle movements that occur when emotions are triggered. Speaking to ZDNet, Fujitsu explained how its technology moves beyond current solutions:

“The issue with the current technology is that the AI needs to be trained on huge datasets for each AU. It needs to know how to recognise an AU from all possible angles and positions. But we don't have enough images for that – so usually, it is not that accurate.”

Changing the AI Learning Process

One obstacle to detecting subtle emotions has been the way the AI models are trained: huge datasets are needed to teach AI to recognize each expression. Fujitsu says it has overcome this problem by developing a tool that extracts more information from each image, rather than training the AI on ever more images.

The company calls this a “normalization process,” which converts images taken at an angle into front-facing photos.

“With the same limited dataset, we can better detect more AUs, even in pictures taken from an oblique angle, for example,” said a Fujitsu spokesperson. “And with more AUs, we can identify complex emotions, which are more subtle than the core expressions currently analysed.”