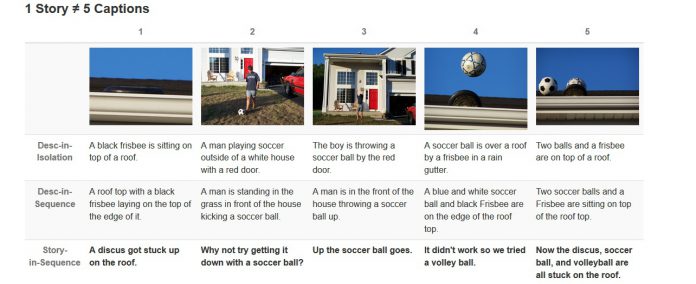

Microsoft's latest AI project builds on the Sequential Image Narrative Dataset (SIND) to create software that can read a series of images and caption them as a storyboard.

With the success of HoloLens and the short-lived failure of its Tay chatbot, Microsoft has shown that it is interested in future technology.

Artificial intelligence is of particular interest to research teams working for Microsoft, and the company has published an academic paper that details its latest AI breakthrough.

The new software can tell a story across multiple images, which the company says could be important for helping the visually impaired.

While software that identifies objects in images to create captions is hardly new, Microsoft says its AI goes further and correlates the details into “stories”.

Microsoft researchers first had to sit people down and have them manually write captions for individual photos, and also arrange captions in order to create stories for a run of images. The information gathered in this process allowed computer engineers to teach machines to create captioned stories for images automatically.

“It's still hard to evaluate, but minimally you want to get the most important things in a dimension. With storytelling, a lot more that comes in is about what the background is and what sort of stuff might have been happening around the event,” Microsoft researcher Margaret Mitchell told VentureBeat in an interview.

The artificial intelligence uses deep learning, something Microsoft has previously used in its speech recognition and translation technology.

“Here, what we're doing is we're saying that every image is fed through a convolutional network to provide one part of the sequence, and you can go over the sequence to create a general encoding of a sequence of images, and then from that general encoding, we can decode out to the story,” said Mitchell, the principal investigator on the paper.
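Mitchell's description follows the general encoder-decoder pattern: turn each image into a feature vector, run a recurrent network over those vectors to get one encoding for the whole sequence, then decode words from that encoding. The following is a toy, untrained illustration of that pattern in pure Python, not Microsoft's model. A single 3x3 convolution with ReLU and average pooling stands in for a real convolutional network, a plain tanh recurrent network stands in for whatever recurrent units the paper actually uses, and the class name `ToyStoryteller`, the vocabulary and the random weights are all hypothetical.

```python
import math
import random
from typing import List

Image = List[List[float]]  # a grayscale image as rows of pixel intensities


def conv_features(image: Image, kernels: List[List[List[float]]]) -> List[float]:
    """Toy stand-in for a CNN: one 'valid' 2-D convolution per kernel,
    then ReLU and global average pooling, giving one feature per kernel."""
    feats = []
    for k in kernels:
        kh, kw = len(k), len(k[0])
        total, count = 0.0, 0
        for i in range(len(image) - kh + 1):
            for j in range(len(image[0]) - kw + 1):
                s = sum(k[a][b] * image[i + a][j + b] for a in range(kh) for b in range(kw))
                total += max(0.0, s)  # ReLU
                count += 1
        feats.append(total / count)  # global average pooling
    return feats


def matvec(m: List[List[float]], v: List[float]) -> List[float]:
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def rnn_step(w_in, w_h, x, h):
    """One step of a plain (Elman) RNN: h' = tanh(W_in x + W_h h)."""
    return [math.tanh(a + b) for a, b in zip(matvec(w_in, x), matvec(w_h, h))]


class ToyStoryteller:
    """Hypothetical, untrained encoder-decoder; weights are random."""

    def __init__(self, vocab: List[str], n_kernels: int = 4, hidden: int = 8, seed: int = 0):
        rng = random.Random(seed)

        def rand(rows: int, cols: int) -> List[List[float]]:
            return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

        self.vocab, self.hidden = vocab, hidden
        self.kernels = [rand(3, 3) for _ in range(n_kernels)]
        self.enc_in, self.enc_h = rand(hidden, n_kernels), rand(hidden, hidden)
        self.dec_in, self.dec_h = rand(hidden, len(vocab)), rand(hidden, hidden)
        self.out = rand(len(vocab), hidden)

    def encode(self, images: List[Image]) -> List[float]:
        h = [0.0] * self.hidden
        for img in images:  # one CNN feature vector per image, read in order
            h = rnn_step(self.enc_in, self.enc_h, conv_features(img, self.kernels), h)
        return h  # a single encoding of the whole image sequence

    def decode(self, h: List[float], max_len: int = 10) -> List[str]:
        words, prev = [], [0.0] * len(self.vocab)
        for _ in range(max_len):
            h = rnn_step(self.dec_in, self.dec_h, prev, h)
            scores = matvec(self.out, h)
            idx = max(range(len(scores)), key=scores.__getitem__)  # greedy choice
            if self.vocab[idx] == "<end>":
                break
            words.append(self.vocab[idx])
            prev = [1.0 if i == idx else 0.0 for i in range(len(self.vocab))]  # feed word back
        return words

    def tell(self, images: List[Image], max_len: int = 10) -> str:
        return " ".join(self.decode(self.encode(images), max_len))


vocab = ["<end>", "we", "went", "to", "the", "beach", "and", "had", "fun"]
rng = random.Random(1)
photos = [[[rng.random() for _ in range(6)] for _ in range(6)] for _ in range(3)]
story = ToyStoryteller(vocab).tell(photos)
print(story)  # weights are untrained, so the words are arbitrary
```

Because nothing here is trained, the printed "story" is meaningless; in a real system the weights would be learned from human-written caption sequences like the ones described earlier.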

Late last month, we reported on Microsoft's Twitter-based chatbot Tay, which debuted on the social network with the ability to talk to users. After a single day in which it learned to become racist, Tay was pulled by Microsoft.

Considering the more serious nature of this latest AI, we hope it is more prepared and works a little better than Tay.