Microsoft’s Project Oxford team today announced plans to release public beta versions of new tools that help app developers take advantage of machine learning and artificial intelligence capabilities, including one that can recognize emotions.

Recent advances in machine learning and artificial intelligence have helped computer scientists build applications that can identify sounds, words, images and even facial expressions.

Chris Bishop, head of Microsoft Research Cambridge in the United Kingdom, presented the emotion tool today in a keynote talk at Future Decoded.

Many of the tools are used in Microsoft products and are designed for developers who don’t have machine learning or artificial intelligence expertise but want to include capabilities such as speech, vision and language understanding in their apps.

Ryan Galgon, a senior program manager within Microsoft’s Technology and Research Group, said, “The exciting thing has been how much interest there is and how diverse the response is.”

Facial Recognition and Microsoft Project Oxford AI

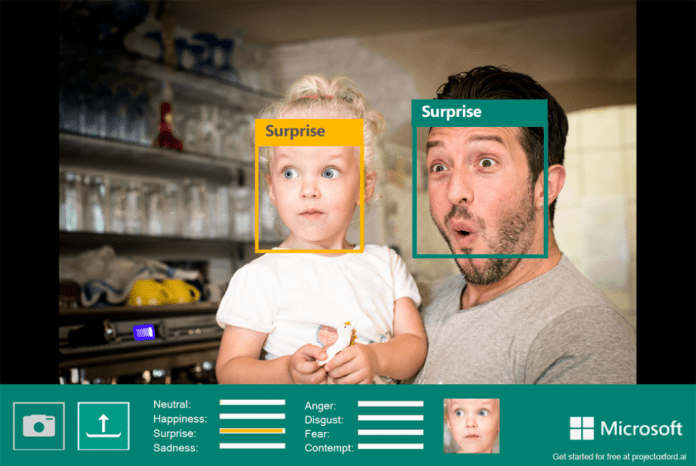

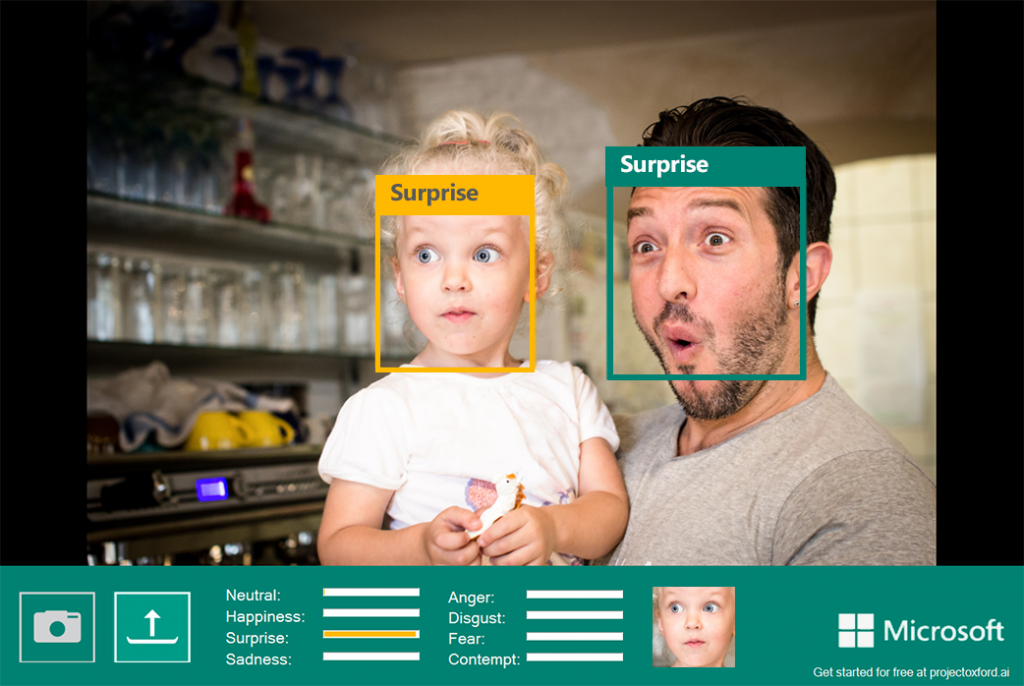

Facial recognition systems learn to recognize certain traits from a set of pictures they receive and then apply that information to identify facial features in new pictures. The emotion tool released today can recognize eight emotional states: anger, fear, disgust, happiness, contempt, neutral, sadness and surprise.

According to Galgon, the tool has many potential uses. Marketers could use it to record people’s reactions to a store display, movie or food. It could also be valuable for creating consumer tools, such as a messaging app that offers different options depending on the emotions it detects.

The emotion tool is available to developers as a public beta. In addition, Microsoft is releasing public beta versions of several other tools by the end of the year. These tools are available as a free trial.

Other tools provided by Microsoft

Spell Check is a tool that recognizes slang words as well as brand names and errors that are difficult to spot. It also adds new brand names and expressions as they start to become popular.

Video is based on Microsoft Hyperlapse and lets customers analyze and automatically edit videos, for example by tracking faces, detecting motion and stabilizing shaky footage. It will be available in beta by the end of the year.

A Speaker Recognition tool will also be available in beta by the end of the year. It can recognize who is speaking by learning the particulars of an individual’s voice.

Custom Recognition Intelligent Services: Also known as CRIS, this tool makes it easier for people to customize speech recognition for challenging environments such as a noisy airport or train station. CRIS can also help people who are non-native speakers or those with disabilities. It will be available as an invite-only beta by the end of the year.

Updates to face APIs: Microsoft Project Oxford is also updating its face APIs with new features such as facial hair and smile prediction tools. The update will also improve visual age estimation and gender identification.

Developers who are interested in these tools can find them on the Microsoft Project Oxford website.

Source: Microsoft