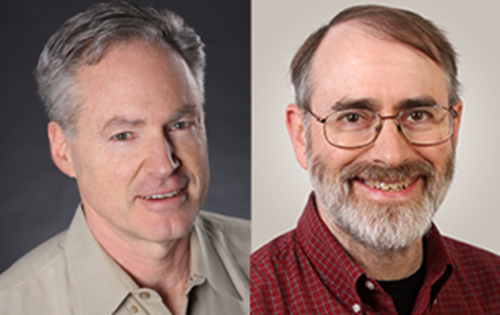

Microsoft researcher Eric Horvitz and Thomas Dietterich of Oregon State University have written a viewpoint column advising researchers to focus on the near-term challenges posed by artificial intelligence. They have also addressed concerns about potential dystopian consequences in the future.

In their column, the two researchers discussed the beneficial contributions of artificial intelligence, including fewer road accidents and fewer errors made in hospitals. They also highlighted that advanced AI technology will bring significant developments in transportation, education, and commerce.

However, they then turned to worst-case scenarios.

“Other scenarios can be imagined in which an autonomous computer system is given access to potentially dangerous resources. The reliance on any computing systems for control in these areas is fraught with risk, but an autonomous system operating without careful human oversight and failsafe mechanisms could be especially dangerous. Such a system would not need to be particularly intelligent to pose risks.

We believe computer scientists must continue to investigate and address concerns about the possibilities of the loss of control of machine intelligence via any pathway, even if we judge the risks to be very small and far in the future. More importantly, we urge the computer science research community to focus intensively on a second class of near-term challenges for AI.

These risks are becoming salient as our society comes to rely on autonomous or semiautonomous computer systems to make high-stakes decisions. In particular, we call out five classes of risk: bugs, cybersecurity, the “Sorcerer's Apprentice,” shared autonomy, and socioeconomic impacts.”

In particular, they classify the risks of artificial intelligence into five major types:

- Cybersecurity

- Bugs

- “Sorcerer's Apprentice”

- Socioeconomic impacts

- Shared autonomy

They explained these challenges in detail, saying that “achieving the potential tremendous benefits of AI for people and society will require ongoing and vigilant attention to the near- and longer-term challenges to fielding robust and safe computing systems.”

Based on that argument, Dietterich and Horvitz urge the entire computer science community to address the potential risks associated with machine intelligence.

You can read the complete column on rising concerns about AI in Communications of the ACM, the journal of the Association for Computing Machinery.

Source: Microsoft Research, Communications of the ACM

Last Updated on July 6, 2016 12:18 am CEST by WinBuzzer